Attention-selling business models have incentives for fostering unhealthy addictive behavior. They are refining online interactions into the equivalent of digital heroin: experiences that are highly pleasurable, highly addictive, and in the long run seriously harmful. My goal is to offer several perspectives on certain business models that encourage a “race to the bottom” and provide some suggestions for how to correct or at least manage the problem.

Superstimulus Is Not New, and It’s Not Just a Problem for Digital Experiences

“A candy bar is a superstimulus: it contains more concentrated sugar, salt, and fat than anything that exists in the ancestral environment. A candy bar matches taste buds that evolved in a hunter-gatherer environment, but it matches those taste buds much more strongly than anything that actually existed in the hunter-gatherer environment. The signal that once reliably correlated to healthy food has been hijacked, blotted out with a point in tastespace that wasn’t in the training dataset – an impossibly distant outlier on the old ancestral graphs. Tastiness, formerly representing the evolutionarily identified correlates of healthiness, has been reverse-engineered and perfectly matched with an artificial substance. Unfortunately, there’s no equally powerful market incentive to make the resulting food item as healthy as it is tasty. We can’t taste healthfulness, after all.”

Eliezer Yudkowsky in “Superstimuli and the Collapse of Western Civilization” (2007)

Tobacco, alcohol, cocaine, and opium are established mood inducers that can also foster addictive behavior, offering additional analogs for digital products (“digital heroin”) that hijack the brain’s reward system.

Superstimulus: Refining Online Interactions into Digital Heroin

At least three people have died playing online games for days without rest. People have lost their spouses, jobs, and children to World of Warcraft. If people have the right to play video games – and it’s hard to imagine a more fundamental right – then the market is going to respond by supplying the most engaging video games that can be sold, to the point that exceptionally engaged consumers are removed from the gene pool.

How does a consumer product become so involving that, after 57 hours of using the product, the consumer would rather use the product for one more hour than eat or sleep?

I don’t know all the tricks used in video games, but I can guess some of them – challenges poised at the critical point between ease and impossibility, intermittent reinforcement, feedback showing an ever-increasing score, social involvement in massively multiplayer games.

Is there a limit to the market incentive to make video games more engaging? You might hope there would be no incentive past the point where the players lose their jobs; after all, they must be able to pay their subscription fee. This would imply a “sweet spot” for the addictiveness of games, where the mode of the bell curve is having fun, and only a few unfortunate souls on the tail become addicted to the point of losing their jobs. But video game manufacturers compete against each other, and if you can make your game 5% more addictive, you may be able to steal 50% of your competitor’s customers.

Eliezer Yudkowsky in “Superstimuli and the Collapse of Western Civilization” (2007)

There is a clear risk of a “race to the bottom” in which the damage a product inflicts leads to short-term profits but long-term misery.

Hard Liquor, Cigarettes, and Heroin: More Concentrated Forms of Less Addictive Predecessors

“What hard liquor, cigarettes, heroin, and crack have in common is that they’re all more concentrated forms of less addictive predecessors. Most if not all the things we describe as addictive are. And the scary thing is, the process that created them is accelerating.

We wouldn’t want to stop it. It’s the same process that cures diseases: technological progress. Technological progress means making things do more of what we want. When the thing we want is something we want to want, we consider technological progress good. If some new technique makes solar cells x% more efficient, that seems strictly better. When progress concentrates something we don’t want to want—when it transforms opium into heroin—it seems bad. But it’s the same process at work.

No one doubts this process is accelerating, which means increasing numbers of things we like will be transformed into things we like too much.”

Paul Graham in “The Acceleration of Addictiveness” (2010)

Graham’s critique echoes Yudkowsky’s and also warns of an accelerating range of risks.

“I’ve avoided most addictions, but the Internet got me because it became addictive while I was using it. People commonly use the word “procrastination” to describe what they do on the Internet. It seems to me too mild to describe what’s happening as merely not-doing-work. We don’t call it procrastination when someone gets drunk instead of working.”

Paul Graham in “The Acceleration of Addictiveness” (2010)

His personal recipe is conscious withdrawal–e.g. taking long hikes–to avoid the risk of addiction. Kicking digital heroin requires a digital withdrawal. Clay Johnson’s “Information Diet” frames this as a positive injunction to pay attention to the information that you are consuming.

American Society of Addiction Medicine: Definition of Addiction

Addiction is a primary, chronic disease of brain reward, motivation, memory and related circuitry. Dysfunction in these circuits leads to characteristic biological, psychological, social and spiritual manifestations. This is reflected in an individual pathologically pursuing reward and/or relief by substance use and other behaviors.

Addiction is characterized by inability to consistently abstain, impairment in behavioral control, craving, diminished recognition of significant problems with one’s behaviors and interpersonal relationships, and a dysfunctional emotional response. Like other chronic diseases, addiction often involves cycles of relapse and remission. Without treatment or engagement in recovery activities, addiction is progressive and can result in disability or premature death.

American Society of Addiction Medicine: Definition of Addiction

This is an escalating series of problems: “inability to consistently abstain, impairment in behavioral control, craving, diminished recognition of significant problems with one’s behaviors and interpersonal relationships, and a dysfunctional emotional response.” The last two are tripwires that you should not ignore.

Roger McNamee: Facebook and YouTube Revenue Depends on Gaining and Maintaining Attention

I invested in Google and Facebook years before their first revenue and profited enormously. I was an early adviser to Facebook’s team, but I am terrified by the damage being done by these Internet monopolies.

Facebook and Google get their revenue from advertising, the effectiveness of which depends on gaining and maintaining consumer attention. Borrowing techniques from the gambling industry, Facebook, Google and others exploit human nature, creating addictive behaviors that compel consumers to check for new messages, respond to notifications, and seek validation from technologies whose only goal is to generate profits for their owners.

The people at Facebook and Google believe that giving consumers more of what they want and like is worthy of praise, not criticism. What they fail to recognize is that their products are not making consumers happier or more successful. Like gambling, nicotine, alcohol or heroin, Facebook and Google — most importantly through its YouTube subsidiary — produce short-term happiness with serious negative consequences in the long term. Users fail to recognize the warning signs of addiction until it is too late.

Roger McNamee: I invested early in Google and Facebook and regret it (2017)

As with alcohol or gambling, addiction is probably inherent in the product for people with a genetic predisposition to certain types of addictive behavior. My hypothesis is that the level of “dopamine-kick” is at least partially a function of genes and probably partially a function of nurture. For example, some people cannot drink socially, and encouraging them to have one drink “to relax with the rest of us” may cause them harm. To the extent that you are designing your application to consume more and more of the user’s attention (note that the customer is the advertiser and the addicted user is the product), and to make it more and more addictive, you may be ruining some people’s lives.

As a developer, you may feel that all technologies and services have side effects: the same Internet that enabled telecommuting also accelerated offshoring; self-driving cars may remove the need for a suicidal terrorist to deliver a car bomb; cell phones allow you to get roadside help if your car breaks down, but using them while you are driving increases your chance of an accident.

If the direct goal of your business model is to consume more and more of your user’s attention–so that you can sell it to your customers–and you don’t place any limits on how much you will “harvest,” you are on a path to destroying people’s lives. Even if your business model does not profit from addictive behavior, it’s worth monitoring for signs of addiction, as Netflix and Hulu do. I believe the goal of developing an “addictive app” is similar to developing digital heroin: likely to be highly profitable but ethically and morally bankrupt. I think McNamee is offering a direct and specific ethical test for anyone developing applications.

Tristan Harris Goes Undercover in the Sausage Factory

“YouTube wants to maximize how much time you spend. And so what do they do? They autoplay the next video. And let’s say that works really well. They’re getting a little bit more of people’s time. Well, if you’re Netflix, you look at that and say, well, that’s shrinking my market share, so I’m going to autoplay the next episode. But then if you’re Facebook, you say, that’s shrinking all of my market share, so now I have to autoplay all the videos in the newsfeed before waiting for you to click play. So, the Internet is not evolving at random. The reason it feels like it’s sucking us in the way it is because of this race for attention. We know where this is going. Technology is not neutral, and it becomes this race to the bottom of the brain stem of who can go lower to get it.”

Tristan Harris in “The manipulative tricks tech companies use to capture your attention” (2017)

The contrast with subscription services like Netflix or Hulu is instructive. If you are binge-watching your favorite series, both services will put up an interstitial after every third or fourth episode asking if you are still watching and, in Hulu’s case, suggesting you take a break. Because they make their money by getting subscribers to renew, they want them to be delighted with the service, but they are not committed to absorbing every scrap of your free time in order to sell ads.
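For developers who want to build a similar brake into their own product, the logic can be quite small. The sketch below is a hypothetical illustration in Python, not a description of how Netflix or Hulu implement it: the class name `AutoplayGuard`, the default of three episodes, and the return values are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class AutoplayGuard:
    """Hypothetical autoplay brake: ask for confirmation every few episodes."""

    episodes_between_prompts: int = 3  # assumed threshold, not a published value
    unattended_episodes: int = 0       # episodes auto-played since the last user action

    def on_episode_finished(self) -> str:
        """Return 'autoplay' to continue or 'prompt' to show an interstitial."""
        self.unattended_episodes += 1
        if self.unattended_episodes >= self.episodes_between_prompts:
            return "prompt"
        return "autoplay"

    def on_user_interaction(self) -> None:
        """Any deliberate action (play, seek, confirm) resets the counter."""
        self.unattended_episodes = 0


guard = AutoplayGuard()
decisions = [guard.on_episode_finished() for _ in range(3)]
print(decisions)  # ['autoplay', 'autoplay', 'prompt']
```

The design choice worth noting is that the counter resets only on a deliberate user action, so passive viewing always runs into the prompt eventually.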

Outrage and Righteous Indignation are also Addictive

“Now, if this is making you feel a little bit of outrage, notice how that thought just comes over you. Outrage is a really good way also of getting your attention, because we don’t choose outrage. It happens to us. And if you’re the Facebook newsfeed, whether you’d want to or not, you actually benefit when there’s outrage. Because outrage doesn’t just schedule a reaction in emotional time, space, for you. We want to share that outrage with other people. So, we want to hit share and say, “Can you believe the thing that they said?” Outrage works really well at getting attention, such that if Facebook had a choice between showing you the outrage feed and a calm newsfeed, they would want to show you the outrage feed, not because someone consciously chose that, but because that worked better at getting your attention. And the newsfeed control room is not accountable to us. It’s only accountable to maximizing attention. It’s also accountable, because of the business model of advertising, for anybody who can pay the most to actually walk into the control room and say, “That group over there, I want to schedule these thoughts into their minds.” So you can target, you can precisely target a lie directly to the people who are most susceptible. And because this is profitable, it’s only going to get worse.”

Tristan Harris in “The manipulative tricks tech companies use to capture your attention” (2017)

This is more troubling and has clearly accelerated in the United States since Tristan Harris presented this in 2017. There are implications for the body politic beyond my focus in this post on addictive digital applications but, at a minimum, the new content providers are replacing traditional journalists without accountability or a code of ethics.

Countering Addiction with Mindfulness and Explicitly Healthy Behaviors

“My latest trick is taking long hikes. I used to think running was a better form of exercise than hiking because it took less time. Now the slowness of hiking seems an advantage, because the longer I spend on the trail, the longer I have to think without interruption.

Sounds pretty eccentric, doesn’t it? It always will when you’re trying to solve problems where there are no customs yet to guide you. Maybe I can’t plead Occam’s razor; maybe I’m simply eccentric. But if I’m right about the acceleration of addictiveness, then this kind of lonely squirming to avoid it will increasingly be the fate of anyone who wants to get things done. We’ll increasingly be defined by what we say no to.”

Paul Graham in “The Acceleration of Addictiveness” (2010)

I wonder if we won’t also see the equivalent of dosimeter badges and dive computers that alert us when our attention is getting hijacked. There are already apps that allow you to block access to websites, but I am thinking of something more like MyFitnessPal that lets you track where you are spending your time across web and phone. Something like Freedom may be a useful start on this. This is probably a fruitful category for the Quantified Self movement to explore.
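A dosimeter of this kind could be as simple as a running tally per app checked against a daily budget. The Python sketch below is purely illustrative: `AttentionDosimeter`, the budget values, and the app names are hypothetical, and it does not use the API of Freedom, MyFitnessPal, or any real tracker.

```python
from collections import defaultdict


class AttentionDosimeter:
    """Hypothetical tracker: accumulate minutes per app and flag exceeded budgets."""

    def __init__(self, daily_budget_minutes: dict[str, float]) -> None:
        self.budget = dict(daily_budget_minutes)  # assumed per-app daily limits
        self.used = defaultdict(float)            # minutes logged today per app

    def log(self, app: str, minutes: float) -> None:
        """Record time spent in an app; negative durations are rejected."""
        if minutes < 0:
            raise ValueError("minutes must be non-negative")
        self.used[app] += minutes

    def alerts(self) -> list[str]:
        """Return the apps whose logged time exceeds their budget, sorted by name."""
        return sorted(
            app for app, limit in self.budget.items() if self.used[app] > limit
        )


meter = AttentionDosimeter({"social": 15, "video": 60})
meter.log("social", 10)
meter.log("social", 12)
meter.log("video", 45)
print(meter.alerts())  # ['social']
```

A real tool would need to collect usage automatically across web and phone; the sketch only shows the budget-and-alert step.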

I sharply restrict my use of Facebook to a few minutes every two or three weeks to keep track of what distant friends and family are up to (ignoring the 3-4 emails a day it sends that say “did you see what your friend John did?”). I don’t run YouTube in continuous play mode. And I try to resist the temptation to correct–for their own good of course–someone who is wrong on the Internet. I think the abuses will get fixed through a combination of increased awareness, specific regulation targeting certain dark patterns (much as rules on unsolicited commercial email and junk faxes, and do-not-call lists, were created), and new applications that help us manage our attention and our tendencies toward different kinds of addictive behavior.

For “Defense against the Dark Arts” see DarkPatterns.Org and “App Ratings” at Time Well Spent.

“Tell me what you pay attention to and I will tell you who you are.”

Jose Ortega y Gasset

If You are Developing an Application, Take this Test

Does your product:

- Honor both on and off-screen possibilities?

- Make it easy to disconnect?

- Enhance relationships, or keep people isolated?

- Respect people’s schedules and boundaries?

- Help people “get life well lived”?

- Land specific, “net positive” benefits in people’s lives?

- Minimize misinterpretations and empower truth-seeking?

- Eliminate detours and distractions?

Center for Humane Tech: Calling All Technology Designers

Joe Edelman has done quite a bit to provide constructive guidance to developers, not only about attention management but also about aligning with customers’ values and quality of life:

- Four Ideas for Better Human Systems

- Can Software Be Good For Us? (Original Title: “Dear Zuck (and Facebook Product Teams)”)

- How to Design Social Systems (Without Causing Depression and War)

For Further Reading

- 1843 Magazine: Scientists Who Make Apps Addictive “These days, of course, we all carry slot machines in our pockets.”

- Tristan Harris: How Technology Hijacks People’s Minds – from a Magician and Google’s Design Ethicist

- Information Diet / Clay Johnson http://informationdiet.com/ and https://player.vimeo.com/video/63368251

- Time Well Spent / Tristan Harris http://www.timewellspent.io/

- Brad Berens: Facebook Needs a Surgeon General’s Warning

“The tobacco companies want people to smoke more because it’s good for business, even though cigarettes make people sick. Likewise, Facebook’s goal is not to make its users happy; the goal is to make its users use Facebook more. One day soon we might see a screen pop up when we log into Facebook that reads, ‘WARNING: Facebook Use May Be Hazardous to Your Happiness.’”

- Maciej Ceglowski, founder of Pinboard, gave a great talk on Sep-14-2014: “What Happens Next Will Amaze You”

“It took a long time to establish that environmental smoke exposure was harmful, and even longer to translate this into law and policy. We had to believe in our capacity to make these changes happen for a long time before we could enjoy the results. I use this analogy because the harmful aspects of surveillance have a long gestation period, just like the harmful effects of smoking, and reformers face the same kind of well-funded resistance. That doesn’t mean we can’t win. But it does mean we have to fight.”

Related Blog Posts

- Pearl Harbor to 9-11 to the Panopticon

- Bill Davidow on Silicon Valley Values

- Deprecating Facebook

- Deprecating Facebook Part Two

- Deprecating Facebook 2: Marcelo Rinesi’s Perspective

Credits

- Photo Credit (c) BeamWise, Inc. (used with permission).

- Cartoon Credit: “Duty Calls” (Randall Munroe)

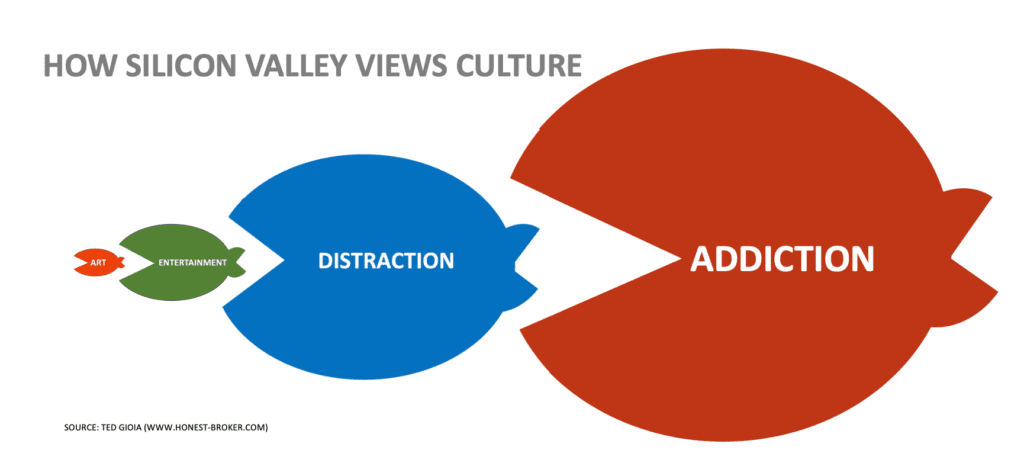

- Image Credit: “Art-Entertainment-Distraction-Addiction” by Ted Gioia; used with attribution.

Postscript: based on a suggestion left by Brad Pierce, I have also published this on:

- Medium: https://medium.com/@skmurphy/superstimulus-refining-online-interactions-into-digital-heroin-de6c293fbecb

- LinkedIn: https://www.linkedin.com/pulse/superstimulus-refining-online-interactions-digital-heroin-sean-murphy/

Brad has also blogged about this in “The Mortal Enemy of Creativity is Entertainment”

Update Mon-Jun-4: Apple today announced “iOS 12 introduces new features to reduce interruptions and manage Screen Time.”

“In iOS 12, we’re offering our users detailed information and tools to help them better understand and control the time they spend with apps and websites, how often they pick up their iPhone or iPad during the day and how they receive notifications. We first introduced parental controls for iPhone in 2008, and our team has worked thoughtfully over the years to add features to help parents manage their children’s content. With Screen Time, these new tools are empowering users who want help managing their device time, and balancing the many things that are important to them.”

Craig Federighi, Apple’s senior vice president of Software Engineering.

Update Sun-Feb-18-2024: In “State of the Culture 2024” Ted Gioia outlines the evolution from art to entertainment to distraction to addiction. Well worth reading.